Ideas Validate Faster on Screen Than on Paper

Explaining an idea and thinking about a product are two different jobs.

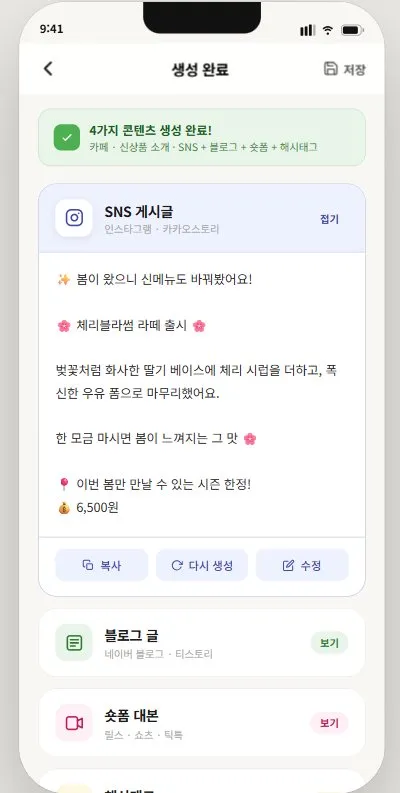

When I wrote the idea into a business plan application, the feature list grew naturally: photo input, memo input, blog post generation, SNS copy generation, short-form scripts, hashtags. One sentence leads to “and it would also be nice if…” While everything is still on paper, it’s hard to see what’s essential and what’s excess.

Moving it to a screen changed things.

The Screen Changed the Question

My first thought after generating the prototype with Claude Design was: “This is more than I expected.” It had screens for onboarding, the dashboard, input, the generating state, results, editing, templates, and save confirmation. Walking through the flow, I could immediately see where a user might stop.

When I was writing it out, the question was “what features does it have?” After seeing the screens, the question became “will users know what to do here?” The idea was the same, but different things became visible.

In a generative AI service, “what it generates” may be less important than it seems. If a user gets stuck on the first screen, they never reach the generation step. The energy required to start has to be low. That’s why onboarding was the first thing I cut in this prototype.

A Prototype Is a Thinking Tool, Not a Draft

The MVP scope only became clear when I made v2 after seeing v1. The sequence matters: I didn’t define the scope and then build the screens. I saw the screens, and then the scope became visible.

Features that were hard to remove when planning in writing were naturally filtered out in front of the screens. “Does this screen actually need to exist?” becomes an easy question to ask. The prototype became the tool for designing the MVP.

The point wasn’t knowing how to use the tool. The key was that once a screen exists, more things become possible to judge.

What’s Still Open

Whether this prototype works for real small business owners, I still don’t know. Having screens isn’t the same as having validation. Does it feel like a tool built for real marketing situations rather than a general AI tool? Is the output ready to use without editing? Those questions can only be answered by trying it.

The move from idea to screen is done. Next is putting it in front of real users.

Core insight: Prototyping reveals what an idea actually needs, faster than any written spec.

What was missing: A way to see where users would stop, before any real building begins.

What changes now: Scope decisions come after seeing the screen, not before.

Reader question: When you moved an idea from a written spec to an actual screen, what changed most?